ABOUT US

The Cheng Kar-Shun Robotics Institute (CKSRI) is a multidisciplinary platform that supports and facilitates robotics-related research, development and education. With a focus on autonomous systems and robotics research, the Institute integrates innovations in sensors, devices, systems, networks, neurosciences, data analytics and machine learning to further research by faculty and students in a wide range of applications that aim to create societal impact. The Institute also nurtures a network of industry partners, designs entrepreneurship programs and drives knowledge dissemination.

In 2015, HKUST launched its Robotics Institute, a major new multidisciplinary research initiative. The Institute supports the endeavors of faculty and students working on robotics and autonomous systems across the campus.

In June 2021, the Institute was renamed the Cheng Kar-Shun Robotics Institute, following a generous donation from Chow Tai Fook Charity Foundation Limited to HKUST to support academic and research development.

Key Research Areas

AUTONOMOUS FLIGHT

From robotic navigation to robotic vision, autonomous flight research at the HKUST Robotics Institute is taking off to new heights. The Institute has also partnered with industry leader DJI to jointly advance unmanned aerial vehicle (UAV) technology.

MARINE ROBOTICS

Prof. Fumin ZHANG's research interests include mobile sensor networks, maritime robotics, control systems, and theoretical foundations for cyber-physical systems.

SMART CONSTRUCTION

The Robotics Institute is combining UAV technology and building information modeling (BIM) technology in the application of smart construction. The UAV-based approach can substantially reduce construction cost and labor.

SMART MANUFACTURING

Smart manufacturing is one of the most important global industries. In particular, assembly, testing and packaging are overly reliant on humans. Research is now being conducted to endow robots with the flexibility and delicacy to perform these roles.

HUMANOID ROBOT

HKUST researchers across disciplines are working to develop robots with ever more powerful “minds”. HKUST is at the forefront of building machines that can learn, act, and sense emotions like their human creators, with far-reaching applications for the future, such as home and service robots.

VISUAL INTELLIGENCE LAB

Prof. Qifeng CHEN focuses on AI solutions for visual computing and content generation. His research interests include image processing and synthesis, 3D vision, and autonomous driving.

ROBOTIC MANIPULATION

Flipbot is a novel solution for singulating and grasping thin, flexible, deformable objects that utilizes the cross-sensory encoding of exteroceptive and proprioceptive perception.

FLEXIBLE ELECTRONICS

Prof. Hongyu YU focuses on designing and fabricating origami- or metastructure-enabled flexible devices and electronics at the macro, micro, and nano scales.

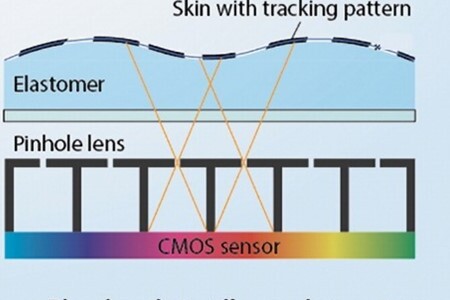

SOFT ROBOTICS

Prof. Hongyu YU's group has developed a novel optical-based tactile sensor, covering its design, fabrication, characterization, and testing. An effective signal processing algorithm has also been developed for the sensor output.

SMART SENSORS

Inertial sensors (e.g. accelerometers) are widely used in consumer electronics (e.g. smartphones), aerospace (e.g. drones), automotive (e.g. airbag deployment) and other industries.

MINIATURE ROBOTIC SYSTEM

Prof. SHEN Yajing has developed a tiny, soft robot with caterpillar-like legs capable of carrying heavy loads and adapting to a variety of environments inside the body. Small, soft robots are gaining attention around the world for their potential uses in biomedicine.

AUTONOMOUS DRIVING

Integrating 3D perception, cloud robotic systems and LiDAR technology, autonomous driving research at HKUST has resulted in Hong Kong’s first driverless car.